How to Set Up Rate Limiting for 2-Factor Authentication | WordPress

Rate limiting improves network security by restricting network traffic. It caps how many times a client can repeat an action within a given interval, such as repeatedly requesting a website resource. Rate limiting helps prevent malicious bot activity and, at the same time, reduces the load on web servers.
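To make the idea concrete, here is a minimal sketch of a fixed-window rate limiter. It is an illustration only and not the plugin's implementation, which this guide does not describe; the class name `FixedWindowRateLimiter` and its parameters are hypothetical.

```python
import time


class FixedWindowRateLimiter:
    """Allow at most `max_requests` per client in each `window_seconds` interval.

    Hypothetical illustration; not the plugin's actual code.
    """

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._windows = {}  # client id -> (window start time, requests seen in window)

    def allow(self, client_id):
        """Return True if the request is within the limit, False if it should be blocked."""
        now = self.clock()
        start, count = self._windows.get(client_id, (now, 0))
        if now - start >= self.window_seconds:
            # The previous window has expired, so start counting again.
            start, count = now, 0
        if count >= self.max_requests:
            self._windows[client_id] = (start, count)
            return False
        self._windows[client_id] = (start, count + 1)
        return True
```

For example, `FixedWindowRateLimiter(max_requests=3, window_seconds=60)` would let each client make three requests per minute and reject further ones until the window resets. Each client is tracked separately, so one noisy client does not use up the allowance of others.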

Follow these steps to set up Rate Limiting:

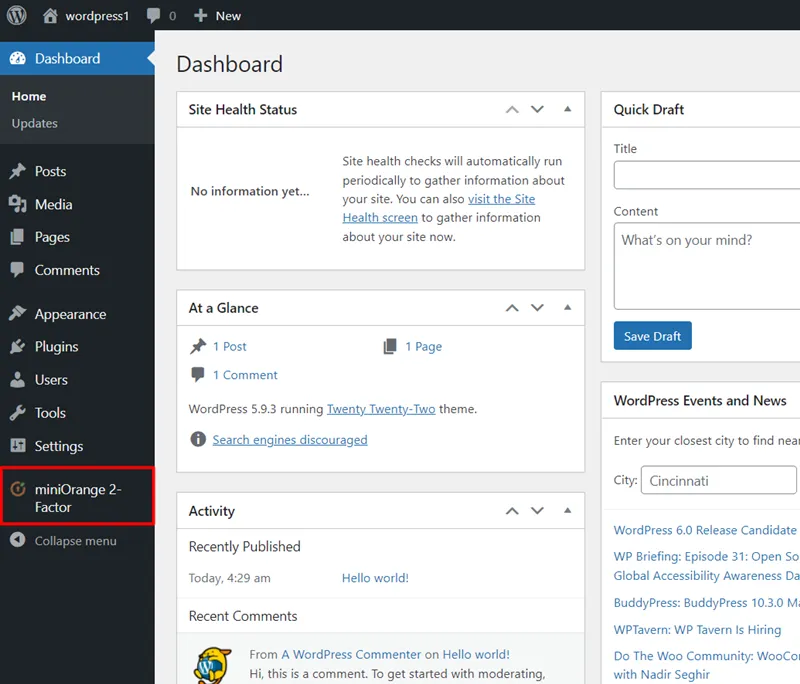

- Click on the miniOrange 2-Factor plugin in the left side menu.

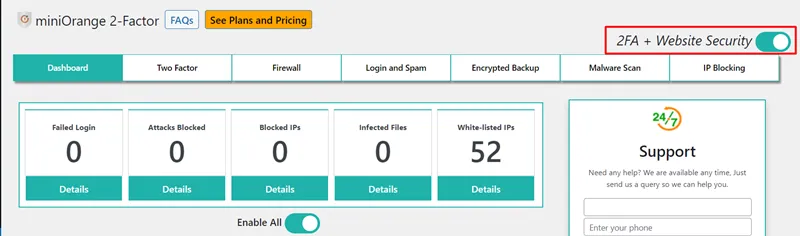

- Enable the 2FA+Website Security toggle button.

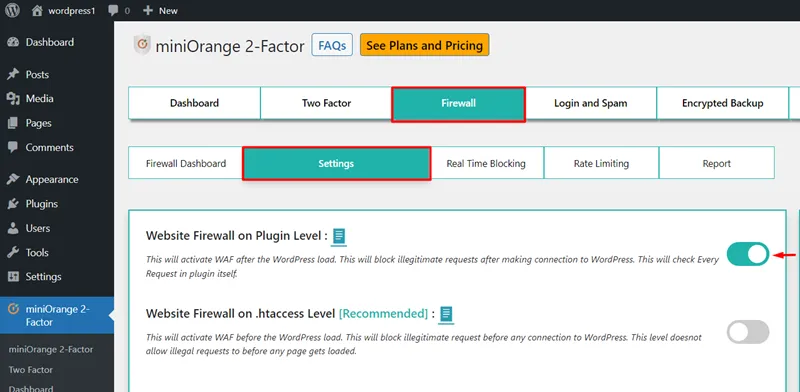

- Go to the Firewall tab and click on the Settings button.

- Navigate to the Website Firewall on plugin level option and enable the toggle button.

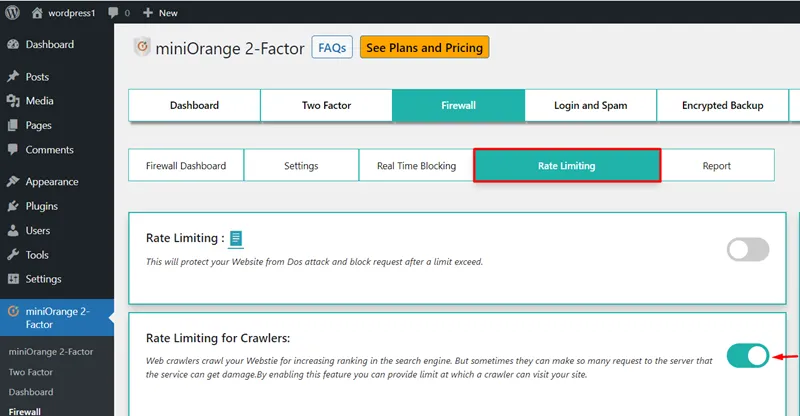

- Click on the Rate Limiting button.

- Navigate to the Rate Limiting for Crawlers feature and enable the toggle button.

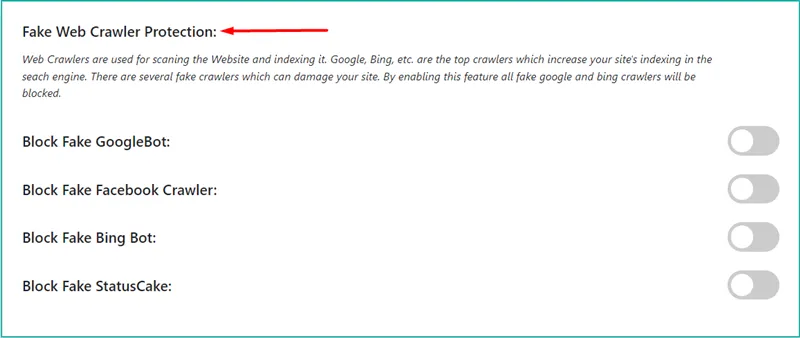

- Navigate to the Fake Web Crawler Protection feature.

- To block fake crawlers, enable the fake crawler toggle button as shown in the image below.

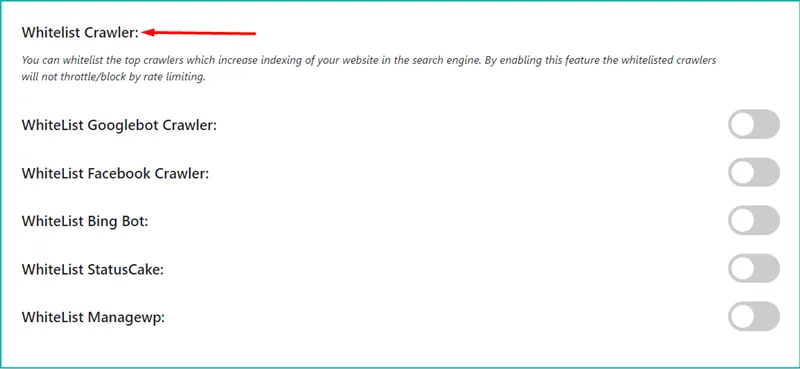

- Navigate to the Whitelist Crawler feature.

- To whitelist a crawler, enable the toggle button for that crawler.

1. Rate Limiting for Crawlers-

Crawlers scan your website to improve its search engine ranking. However, they can sometimes send so many requests that the server becomes unavailable under the excessive load. You can use this feature to limit the number of times a crawler can access your site.

2. Fake Web Crawlers Protection-

Web crawlers scan and index websites. The most important crawlers for getting your site indexed in search engines include those from Google and Bing. However, a number of fake crawlers impersonate them and can cause problems for your website. Once this feature is enabled, all fake Google and Bing crawlers are blocked.
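This guide does not say how the plugin tells real crawlers from fake ones. One widely used approach, sketched below as an assumption rather than the plugin's method, is a reverse DNS lookup on the client IP followed by a forward lookup to confirm it. The function names and the `TRUSTED_SUFFIXES` table are hypothetical; the hostname suffixes are the ones Google and Bing publish for their crawlers.

```python
import socket

# Hostname suffixes published by the search engines for their crawlers.
# The table itself is a hypothetical helper for this sketch.
TRUSTED_SUFFIXES = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def claimed_crawler(user_agent):
    """Return the crawler name the User-Agent claims to be, or None."""
    ua = user_agent.lower()
    for name in TRUSTED_SUFFIXES:
        if name in ua:
            return name
    return None


def is_fake_crawler(ip, user_agent,
                    reverse_lookup=socket.gethostbyaddr,
                    forward_lookup=socket.gethostbyname_ex):
    """Return True if the request claims to be Googlebot or Bingbot but fails DNS checks."""
    name = claimed_crawler(user_agent)
    if name is None:
        return False  # no crawler claim, so nothing to verify
    try:
        hostname = reverse_lookup(ip)[0]
        if not hostname.lower().endswith(TRUSTED_SUFFIXES[name]):
            return True  # reverse DNS points outside the search engine's domains
        # Forward-confirm: the hostname must resolve back to the same IP.
        # Note: gethostbyname_ex only handles IPv4 addresses.
        return ip not in forward_lookup(hostname)[2]
    except OSError:
        return True  # the claim could not be verified
```

The lookup functions are passed in as parameters so the check can be exercised without network access. In practice the results would usually be cached, since DNS lookups on every request are slow.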

3. Whitelist Crawler-

You can whitelist the top crawlers to improve your website's indexing in search engines. The whitelisted crawlers will not be blocked by rate limiting if this feature is enabled.
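The interaction between the whitelist and rate limiting can be sketched as a check that runs before any counting happens. This is a simplified illustration under stated assumptions, not the plugin's code: `make_checker` and `WHITELISTED_CRAWLERS` are hypothetical names, crawlers are matched by User-Agent substring, and the time window reset is left out for brevity.

```python
from collections import Counter

# Hypothetical whitelist, matched against the lowercased User-Agent header.
WHITELISTED_CRAWLERS = ("googlebot", "bingbot")


def make_checker(max_requests, whitelist=WHITELISTED_CRAWLERS):
    """Build an allow(client_ip, user_agent) function with a whitelist bypass."""
    counts = Counter()  # client IP -> number of requests seen so far

    def allow(client_ip, user_agent):
        ua = user_agent.lower()
        if any(name in ua for name in whitelist):
            return True  # whitelisted crawlers are never counted or blocked
        counts[client_ip] += 1
        return counts[client_ip] <= max_requests

    return allow
```

With `allow = make_checker(2)`, a third request from the same non-whitelisted client is rejected, while requests whose User-Agent contains `bingbot` always pass. Because the User-Agent header is easy to forge, a whitelist like this is only safe when the crawler's identity is verified as well.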

If you cannot find what you are looking for, please email us at 2fasupport@xecurify.com